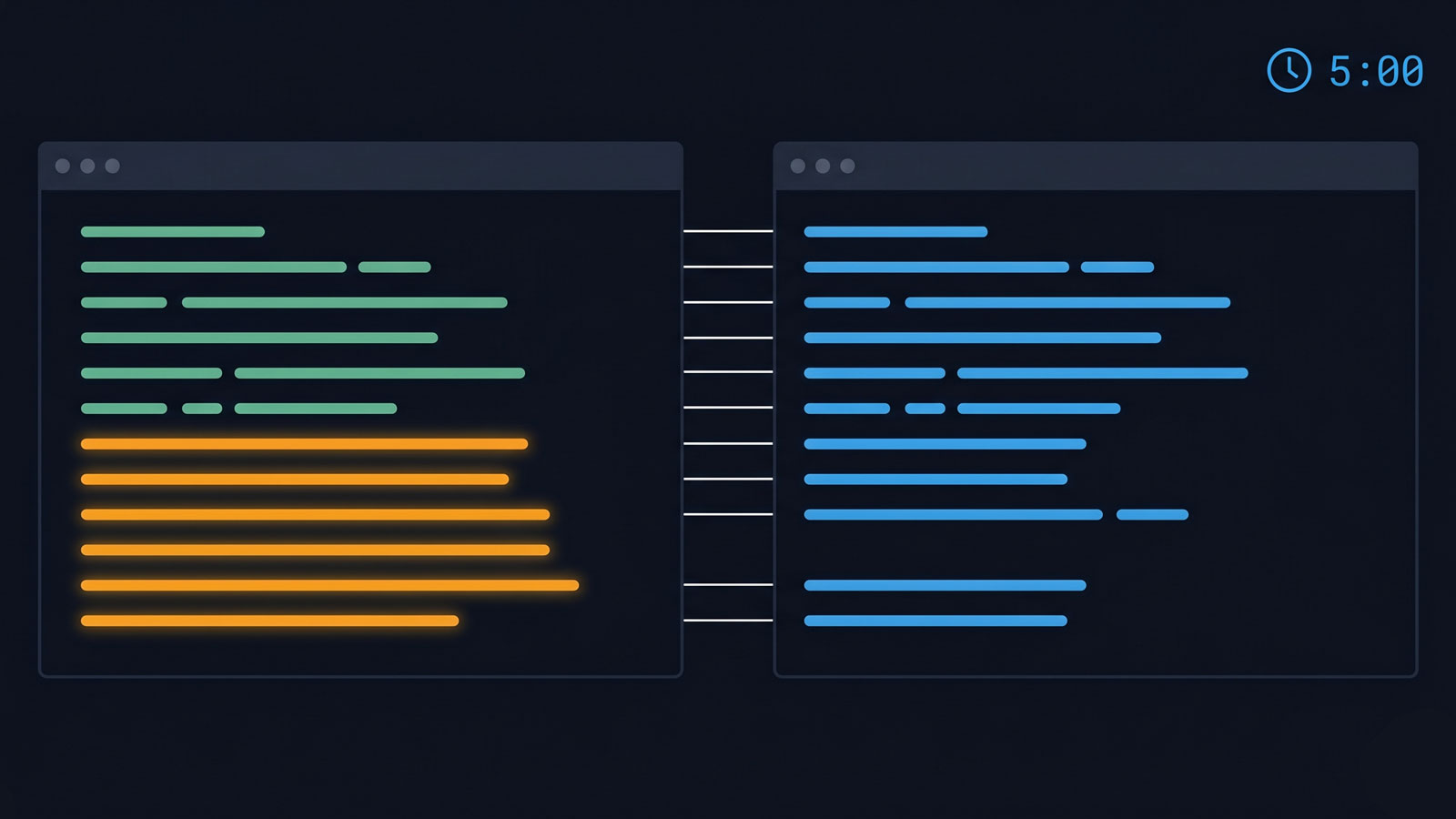

You have a terminal open and five minutes. By minute six you will have a list of routes your test suite has never touched. There will almost certainly be some.

A protected route audit is the process of enumerating all authenticated or role-gated routes in an application and verifying that each route is visited in the test suite by the appropriate user persona. The diff between enumerated routes and tested routes is the route-coverage gap — the set of paths your application serves in production that your tests have never navigated to.

The audit in one paragraph

Enumerate every route your application serves. Enumerate every route your test suite visits. Diff the two lists. The routes in the first list but not the second are your untested routes. Some will be intentional exclusions. Others will be gaps you did not know existed. The audit takes three commands and a one-page diff.

Step 1 — get your route inventory

Your application framework defines routes either by filesystem convention or by explicit declaration. The commands differ by stack, but the principle is the same: extract every route your application can serve in production.

Next.js (App Router):

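```bash
# -E with a '#' delimiter keeps the alternation unambiguous on GNU and BSD sed;
# the last expression maps the root page (empty string) to "/".
find app \( -name 'page.tsx' -o -name 'page.ts' -o -name 'page.jsx' -o -name 'page.js' \) \
  | sed -E 's#/page\.(tsx|ts|jsx|js)$##; s#^app##; s#^$#/#' \
  | sort > routes-inventory.txt
```

Next.js (Pages Router):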

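```bash
# Strip the extension, then map index files to their directory route.
find pages \( -name '*.tsx' -o -name '*.ts' -o -name '*.jsx' -o -name '*.js' \) \
  | grep -Ev '_app|_document|_error' \
  | sed -E 's#^pages##; s#\.(tsx|ts|jsx|js)$##; s#/index$##; s#^$#/#' \
  | sort > routes-inventory.txt
```

Astro: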

```bash
find src/pages \( -name '*.astro' -o -name '*.md' -o -name '*.mdx' \) \
  | sed -E 's#^src/pages##; s#\.(astro|md|mdx)$##; s#/index$##; s#^$#/#' \
  | sort > routes-inventory.txt
```

React Router (explicit route declarations, so you will need to grep your route config):

```bash
grep -roh "path=['\"][^'\"]*['\"]" src/ \
  | sed "s/path=['\"]//; s/['\"]$//" \
  | sort -u > routes-inventory.txt
```

FastAPI / Express (programmatic routes):

```bash
# FastAPI
python -c "from main import app; [print(r.path) for r in app.routes]" \
  | sort > routes-inventory.txt

# Express (requires a route-listing middleware or manual extraction).
# The match stops at the closing quote so app.get('/path', handler) is caught too.
grep -rohE "app\.(get|post|put|delete)\(['\"][^'\"]*['\"]" src/ \
  | sed "s/app\.[a-z]*(['\"]//; s/['\"]$//" \
  | sort -u > routes-inventory.txt
```

For the canonical routing documentation, see Next.js routing and Astro routing.

The result is a text file with one route per line. Every line is a path your application serves in production.
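Filesystem paths are not always URL paths. As a minimal sketch, assuming Next.js App Router conventions (route groups in parentheses, parallel-route slots prefixed with `@`, dynamic segments in brackets), a helper like the hypothetical `normalise_app_route` below turns an inventory line into a URL pattern. The `:param` and `*` placeholders are choices made for this example, not a framework format.

```python
def normalise_app_route(path):
    """Turn an App Router directory path into a URL pattern.

    Assumptions for this sketch: "(group)" and "@slot" folders are not part
    of the URL, "[id]" is one dynamic segment, and "[...slug]" or
    "[[...slug]]" is a catch-all.
    """
    segments = []
    for segment in path.strip("/").split("/"):
        if not segment:
            continue
        if segment.startswith("(") and segment.endswith(")"):
            continue  # route group: organisational only
        if segment.startswith("@"):
            continue  # parallel-route slot: not a URL segment
        if segment.startswith(("[...", "[[...")):
            segments.append("*")  # catch-all segment
        elif segment.startswith("[") and segment.endswith("]"):
            segments.append(":param")  # single dynamic segment
        else:
            segments.append(segment)
    return "/" + "/".join(segments)


for line in ["/(marketing)/pricing", "/users/[id]/settings", "/docs/[...slug]"]:
    print(normalise_app_route(line))
```

Running each line of `routes-inventory.txt` through a helper like this before the diff keeps organisational folders from showing up as false gaps.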

Step 2 — get your test inventory

Now extract every route your test suite navigates to. The patterns differ by test framework, but the concept is the same: grep for navigation calls in your test directory.

Playwright (page.goto):

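```bash
# The match stops at the closing quote so page.goto('/path', { ...options }) is caught too.
grep -roh "page\.goto(['\"][^'\"]*['\"]" tests/ e2e/ \
  | sed "s/page\.goto(['\"]//; s/['\"]$//" \
  | sort -u > test-visited-routes.txt
```

Cypress (cy.visit):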

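```bash
# The match stops at the closing quote so cy.visit('/path', { ...options }) is caught too.
grep -roh "cy\.visit(['\"][^'\"]*['\"]" cypress/ \
  | sed "s/cy\.visit(['\"]//; s/['\"]$//" \
  | sort -u > test-visited-routes.txt
```

Jest / Supertest (request.get or similar):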

```bash
# The match stops at the closing quote, which is what the sed cleanup expects.
grep -rohE "request\.(get|post|put|delete)\(['\"][^'\"]*['\"]" __tests__/ \
  | sed "s/request\.[a-z]*(['\"]//; s/['\"]$//" \
  | sort -u > test-visited-routes.txt
```

For the page.goto API reference, see the Playwright documentation.
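Navigation calls often carry more than a path: a base URL, a query string, a fragment. A minimal sketch of reducing each visited target to a bare path before comparing, using only the standard library (the helper name `visited_path` and the sample URLs are made up for illustration):

```python
from urllib.parse import urlsplit


def visited_path(target):
    """Reduce a navigation target to its path.

    Drops scheme, host, query string and fragment, and trims any trailing
    slash so "/settings/" and "/settings" compare as equal.
    """
    return "/" + urlsplit(target).path.strip("/")


# Hypothetical lines as they might appear in test-visited-routes.txt
targets = [
    "http://localhost:3000/settings?tab=billing",
    "/users/42#profile",
    "/settings/",
]
for path in sorted({visited_path(t) for t in targets}):
    print(path)
```

Template-literal URLs (backtick strings) are not captured by the grep patterns in this step, so they never reach this helper; they need manual review.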

Step 3 — diff the two lists

```bash
comm -23 routes-inventory.txt test-visited-routes.txt
```

The output is every route that exists in your application but has never appeared in a test navigation call. On a typical 50-route SaaS application, this diff usually surfaces between 5 and 15 untested routes.
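One caveat with a literal line comparison: tests usually navigate to concrete URLs such as `/users/42`, while the inventory holds patterns such as `/users/[id]`, so a dynamic route can be reported as untested even when a test visits it. A minimal sketch of pattern-aware matching, assuming bracket-style or colon-style dynamic segments (the function names are hypothetical):

```python
import re


def route_to_regex(route):
    """Compile a route pattern into a regex that matches concrete paths."""
    parts = []
    for segment in route.strip("/").split("/"):
        if segment.startswith(("[...", "[[...")):
            parts.append(".+")  # catch-all: one or more segments
        elif re.fullmatch(r"\[[^\]]+\]|:\w+", segment):
            parts.append("[^/]+")  # [id] or :id: exactly one segment
        else:
            parts.append(re.escape(segment))
    return re.compile("/" + "/".join(parts) + "/?")


def untested_routes(inventory, visited):
    """Return inventory routes that no visited path matches, in inventory order."""
    return [
        route
        for route in inventory
        if not any(route_to_regex(route).fullmatch(path) for path in visited)
    ]


inventory = ["/users/[id]", "/admin/audit-log", "/settings"]
visited = ["/users/42", "/settings/"]
print(untested_routes(inventory, visited))
```

A known limitation of this sketch: a visit to a static sibling such as `/users/new` also satisfies `/users/[id]`, so a dynamic route can look covered when only its static neighbour was tested.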

For larger codebases where the paths don’t align neatly (dynamic segments, parameterised routes, base URL differences), a small script normalises the comparison:

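```python
#!/usr/bin/env python3
import re


def normalise(path):
    # Drop scheme and host so absolute URLs compare against bare paths
    path = re.sub(r'^https?://[^/]+', '', path)
    # Collapse dynamic segments ([id], :id) to a common placeholder
    path = re.sub(r'\[.*?\]', ':param', path)
    path = re.sub(r':[\w]+', ':param', path)
    # Ignore trailing slashes, but keep the root route as "/"
    return path.rstrip('/') or '/'


with open('routes-inventory.txt') as f:
    inventory = {normalise(line.strip()) for line in f if line.strip()}

with open('test-visited-routes.txt') as f:
    tested = {normalise(line.strip()) for line in f if line.strip()}

# Routes the application serves that no test navigates to
untested = sorted(inventory - tested)
for route in untested:
    print(route)
```

What the gap typically looks like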

The routes that show up in the diff follow a pattern. Admin-only pages are the most common: settings sub-pages, user management, audit logs. There is a subtler variant of the same gap. If your suite authenticates only as admin, the admin fixture exercises these routes and they appear tested, but they have never been visited as any other persona, so their behaviour for every other role is unverified.
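The per-persona version of the gap can be sketched as a comparison over route and persona pairs rather than routes alone. The example below assumes you can record which routes each persona's tests visit; the persona names, routes, and the `persona_gaps` helper are all hypothetical.

```python
def persona_gaps(routes, personas, visited):
    """Return (route, persona) pairs that no test has exercised.

    `visited` maps a persona name to the set of routes its tests navigate to.
    Every pair counts, including pairs where the expected result is a denial.
    """
    return [
        (route, persona)
        for route in sorted(routes)
        for persona in sorted(personas)
        if route not in visited.get(persona, set())
    ]


routes = ["/admin/users", "/settings"]
personas = ["admin", "member"]
visited = {
    "admin": {"/admin/users", "/settings"},
    "member": {"/settings"},
}
for route, persona in persona_gaps(routes, personas, visited):
    print(f"{route} never visited as {persona}")
```

In this sample the route-level diff would report nothing, because `/admin/users` is visited by the admin fixture. The pair-level view shows it has never been requested as a member.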

Error and edge-flow pages are next: 404 handlers, permission-denied pages, post-checkout confirmations, password-reset flows. These routes are often created early, never linked prominently, and forgotten by the time the test suite matures.

Then there are archived features. A route was built for a feature that shipped, the feature was later hidden behind a feature flag, the route was never removed. It still responds. It still renders. It is not in the test suite, not in the navigation, and not in anyone’s mental model of the application.

Triaging the gap

Not every untested route is equally urgent. Prioritise by risk:

Security-sensitive routes first. Any route that serves authenticated content or performs state-changing operations must have test coverage. If it accepts POST requests or renders user-specific data, it is a priority regardless of how rarely it is visited.

Billing and conversion flows second. Routes involved in signup, upgrade, checkout, and invoice rendering are revenue-critical. An untested billing page that breaks silently is a revenue leak.

UX-significant routes third. Features your users rely on but that your suite has never navigated to. These are the routes that generate support tickets when they break — because nobody tested them, and nobody noticed until a customer reported the problem.

Intentional exclusions last. Routes that are legitimately excluded from the suite — health check endpoints, internal debugging pages, routes behind permanent feature flags. Document these as expected gaps so they do not surface as false positives on the next audit.
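The triage order above can be sketched as a small sorting pass over the diff output. The keyword lists here are illustrative assumptions, not a rule set; adjust them to your own URL scheme, and treat the result as a first pass for a human to review.

```python
# Hypothetical keyword heuristics; checked in priority order.
RISK_TIERS = [
    ("security", ("admin", "auth", "account", "password", "token")),
    ("billing", ("billing", "checkout", "invoice", "upgrade", "signup")),
]


def triage(untested, exclusions):
    """Group untested routes by risk tier; documented exclusions go last."""
    tiers = {"security": [], "billing": [], "ux": [], "excluded": []}
    for route in sorted(untested):
        if route in exclusions:
            tiers["excluded"].append(route)
            continue
        for tier, keywords in RISK_TIERS:
            if any(keyword in route for keyword in keywords):
                tiers[tier].append(route)
                break
        else:
            tiers["ux"].append(route)  # no keyword matched
    return tiers


untested = ["/admin/audit-log", "/checkout/confirmation", "/healthz", "/reports/export"]
exclusions = {"/healthz"}  # documented, intentional gaps
for tier, routes in triage(untested, exclusions).items():
    print(tier, routes)
```

Keeping the exclusions in a checked-in file means the next audit starts from the documented gaps rather than rediscovering them.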

Why this matters more than coverage percentage

Code coverage is a measure of line execution, not route reachability. A suite can achieve 90% line coverage while leaving 15 routes untouched — because the covered code paths run through shared components, utilities, and middleware that are exercised by other tests.

The route-level diff measures something different: whether the test suite has ever visited a given route in the way a user would visit it. A route that is never navigated to in a test has never had its rendering, its authentication check, or its navigation links verified. It could be broken, inaccessible, or entirely absent from the user’s navigation — and the suite would say nothing. CVE-2025-29927 was the security-shaped version of exactly this gap: routes the suite reached through middleware, but never tested independently.

The diff is not a replacement for code coverage. It is a complement. Coverage tells you how much code ran. The diff tells you how much of your application the suite has actually seen.
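If you want a single number to report alongside line coverage, the same two lists give you one. A minimal sketch, where `route_coverage` is a hypothetical helper and the figure is only as accurate as the two inventories it is built from:

```python
def route_coverage(inventory, tested):
    """Fraction of inventory routes that appear in the tested set."""
    inventory = set(inventory)
    if not inventory:
        return 1.0  # nothing to cover
    return len(inventory & set(tested)) / len(inventory)


inventory = ["/", "/settings", "/admin/users", "/checkout"]
tested = ["/", "/settings", "/legacy-page"]  # extra entries are ignored
print(f"{route_coverage(inventory, tested):.0%} of routes visited")
```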

When to schedule the audit

Run the audit before every major release — it takes five minutes and catches the most obvious gaps. Run it monthly if your team ships features weekly, because new routes accumulate faster than test coverage does. Run it after every framework upgrade, because routing changes can silently alter which paths resolve and which return 404. For the systematic, multi-persona version that diffs the navigation graph per role, see the persona-aware Playwright fixture guide.

The five-minute audit is a point-in-time check. For the systematic, persona-aware version, a Navigation Coverage measurement runs the same diff continuously across every persona.
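Between scheduled audits, one option is to fail a CI job only when the gap grows. The sketch below assumes you keep the previously accepted gaps as a baseline list; the function names and sample routes are hypothetical.

```python
def new_gaps(untested, baseline):
    """Routes untested now that were not already recorded in the baseline."""
    return sorted(set(untested) - set(baseline))


def audit_exit_code(untested, baseline):
    """Return 1 (fail the build) when the gap has grown, otherwise 0."""
    grown = new_gaps(untested, baseline)
    for route in grown:
        print(f"new untested route: {route}")
    return 1 if grown else 0


baseline = ["/healthz", "/admin/audit-log"]  # gaps accepted at the last audit
untested = ["/healthz", "/admin/audit-log", "/reports/export"]
print(audit_exit_code(untested, baseline))
```

In a pipeline you would pass the result to `sys.exit` so that a newly added, never-visited route blocks the merge until it is either tested or added to the baseline.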

Frequently asked questions

How do you enumerate all routes in a Next.js application from the command line?

For App Router: `find app \( -name 'page.tsx' -o -name 'page.ts' \) | sed -E 's#/page\.(tsx|ts)$##; s#^app##' | sort`. For Pages Router: `find pages \( -name '*.tsx' -o -name '*.ts' \) | grep -Ev '_app|_document|_error' | sed -E 's#^pages##; s#\.(tsx|ts)$##' | sort`. Each line in the output is a route your application serves in production.

How do you find routes not covered by your test suite?

Extract all routes your tests navigate to (grep for page.goto or cy.visit patterns in your test directory), then diff against your route inventory using comm -23 routes-inventory.txt test-visited-routes.txt. The output is every production route your suite has never visited.

What types of routes typically show up as untested?

Admin-only pages, post-checkout confirmation flows, error boundary routes, settings sub-pages, and features that were added after the initial test suite was written. On a typical 50-route application, the diff usually surfaces between 5 and 15 untested routes.

How often should you run a protected route audit?

Before every major release, monthly if your team ships features weekly, and after every framework upgrade. New routes accumulate faster than test coverage does, and framework upgrades can silently alter which paths resolve.